Up to 32GB of 3D-stacked memory with 750GB/s of bandwidth

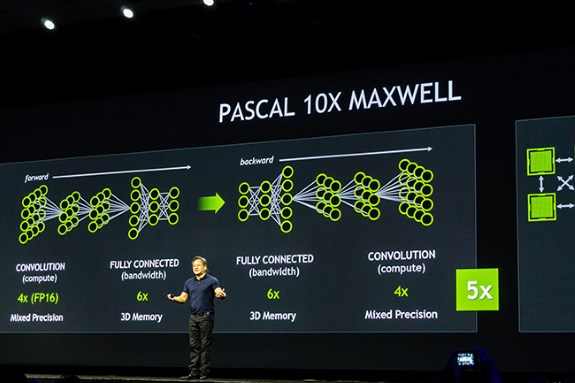

Speaking to the crowd at the GTC, NVIDIA CEO Jen-Hsun Huang estimated that the future 16nm Pascal GPU will be up to ten times faster than Maxwel in terms of deep learning performance. Pascal will offer up to 32GB of 3D-stacked memory, mixed-precision computing support and NVIDIA's NVLink high-speed interconnect when it launches in 2016.

The use of 3D memory promises to more than double the memory bandwidth compared to what we see on Maxwell today (to 750GB/s) while mixed-precision computing provides a big performance boost for tasks that don't need 32-bit floating point accuracy. NVIDIA claims mixed-precision computing will be highly beneficial for deep learning applications as the chip can process twice as fast in fp16 mode. NVLink on the other hand is a new enterprise-class interconnect system for GPUs that promises to deliver more than 5x the bandwidth of current PCI Express implementations.

Mixed-Precision Computing – for Greater AccuracyWhat about gaming performance?

Mixed-precision computing enables Pascal architecture-based GPUs to compute at 16-bit floating point accuracy at twice the rate of 32-bit floating point accuracy.

Increased floating point performance particularly benefits classification and convolution – two key activities in deep learning – while achieving needed accuracy.

3D Memory – for Faster Communication Speed and Power Efficiency

Memory bandwidth constraints limit the speed at which data can be delivered to the GPU. The introduction of 3D memory will provide 3X the bandwidth and nearly 3X the frame buffer capacity of Maxwell. This will let developers build even larger neural networks and accelerate the bandwidth-intensive portions of deep learning training.

Pascal will have its memory chips stacked on top of each other, and placed adjacent to the GPU, rather than further down the processor boards. This reduces from inches to millimeters the distance that bits need to travel as they traverse from memory to GPU and back. The result is dramatically accelerated communication and improved power efficiency.

NVLink – for Faster Data Movement

The addition of NVLink to Pascal will let data move between GPUs and CPUs five to 12 times faster than they can with today’s current standard, PCI-Express. This is greatly benefits applications, such as deep learning, that have high inter-GPU communication needs.

NVLink allows for double the number of GPUs in a system to work together in deep learning computations. In addition, CPUs and GPUs can connect in new ways to enable more flexibility and energy efficiency in server design compared to PCI-E.

What this all means for gaming performance is somewhat of a mystery but NVIDIA did mention that Pascall promises 2x the performance per Watt than Maxwell in single-precision computing. Also keep in mind that the 32GB memory variant is for the GPU compute versions of Pascal, the consumer gaming cards will have a lot less memory. We'll keep you up to date on DV Hardware when more info rolls in but don't expect much soon as these cards are still some time away.

Volta in 2018

NVIDIA's GTC 2015 slides also mention Volta, not a lot of details were mentioned but the slides seem to suggest that Volta may offer a memory bandwidth of up to 900GB/s and up to 64GB of memory capacity when it launches in 2018.