The SSE5 instruction set AMD presented almost two years ago has been dumped, but the chip maker said some features of SSE5 will live on in three new extensions: XOP (for eXtended Operations), CVT16 (half-precision floating point converts), and FMA4 (four-operand Fused Multiply/Add).

With this duplication of functionality between SSE5 and AVX/FMA, and AVX's additional features, we felt the right thing to do was to support AVX. In our minds, a more unified instruction set is clearly what's best for developers and the x86 software industry. With our acceptance of AVX, a key aspect of this instruction set unification is the stability of the specification. Since we don't control the definition of AVX, all we can say for sure is that we expect our initial products to be compatible with version 5 of the specification (the most recent one, as of this writing, published in January of 2009), except for the FMA instructions, which we expect will be compatible with version 3 (published in August of 2008).

Here's a look at the features of AMD's XOP extension:

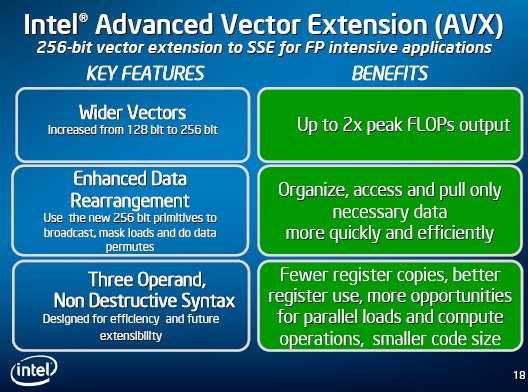

Why the FMA difference? This was not something we did lightly. In December of 2008, Intel made significant changes to the FMA definition, which we found we could not accommodate without unacceptable risk to our product schedules. Yet we did not want to deprive customers of the significant performance benefits of FMA. So we decided to stick with the earlier definition, renaming it FMA4 (for four-operand FMA - Intel's newer definition uses what we believe to be a less capable three-operand, destructive-destination format). It will have a different CPUID feature flag from Intel's FMA extension. At some future point, we will likely adopt Intel's newer FMA definition as well, coexisting with FMA4. But as you might imagine, we may wait until we're sure the specification is stable.
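The difference between the two operand formats can be sketched in plain C. This is only an illustration: `fma4_model`, `fma3_model`, and `operand_form_demo` are hypothetical names, not real intrinsics, and the plain `a * b + c` expression does not model the single rounding step of a true fused multiply-add. The sketch only shows what happens to the inputs.

```c
#include <assert.h>

/* Hypothetical scalar models of the two operand forms (not real intrinsics).
   Plain a * b + c is used, so the single rounding of a true fused
   multiply-add is NOT modeled here; only the operand handling is. */

/* Four-operand, non-destructive form: the destination is separate from
   all three sources, so every input is still available afterwards. */
void fma4_model(double *dst, const double *a, const double *b, const double *c)
{
    *dst = (*a) * (*b) + (*c);
}

/* Three-operand, destructive form: the result overwrites one of the
   inputs (modeled here, as an assumption, as the first operand). */
void fma3_model(double *dst_and_a, const double *b, const double *c)
{
    *dst_and_a = (*dst_and_a) * (*b) + (*c);
}

/* Usage: with the destructive form, an extra copy is needed whenever the
   overwritten input has to survive the operation. */
void operand_form_demo(void)
{
    double a = 2.0, b = 3.0, c = 1.0, d = 0.0;

    fma4_model(&d, &a, &b, &c);
    assert(d == 7.0 && a == 2.0);       /* a is preserved */

    double saved_a = a;                 /* extra move to keep the input */
    fma3_model(&a, &b, &c);
    assert(a == 7.0 && saved_a == 2.0); /* a now holds the result */
}
```

In the four-operand form a compiler never has to spend a register move to protect an input, which is presumably what the quoted passage means by "less capable" for the destructive format.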

The fact remains that AVX does not incorporate all of SSE5's features. Since SSE5 was based on months of discussions with ISVs on what sort of capabilities they felt were needed, and had been positively reviewed by the industry when we first put out the specification, we decided to follow through with development of these additional features. To do so most effectively, we redefined them in the AVX framework, resulting in the XOP extension.

- Horizontal integer add/subtract: Signed or unsigned add, or signed subtract, of adjacent byte, word, or dword elements in the source vector to word, dword or qword elements of the destination vector. 128-bit.
- Integer multiply/accumulate: Multiplies elements of two input vectors, adding the results to a third input vector. 128-bit.
- Shift/rotate with per-element counts: These use a vector of shift counts, allowing each element of the source vector to be shifted or rotated by a different amount. There is also a rotate instruction with an immediate-byte single count applied to all elements. 128-bit.
- Integer compare: Signed and unsigned comparison of byte, word, dword and qword elements, with predicate (mask) generation as in the various SSE compare instructions. The particular comparison to perform is specified in an immediate byte. 128-bit.
- Byte permute: A powerful operation which copies bytes from two 16-byte input vectors to a 16-byte destination vector, optionally performing a selected transformation on each, under the control of a third input vector. 128-bit.
- Bit-wise conditional move: Selects each bit of the destination vector from either of two input vectors, per a third input vector. 128- and 256-bit.
- Fraction extract: Extract the mantissa from floating point operands. Scalar and 128- or 256-bit vector, single and double precision.
- Half-precision convert: These convert between half-precision and single-precision formats while loading or storing a four- or eight-element vector. They provide dynamic control of rounding and denormalized operand handling. These particular instructions form a separate extension called CVT16, with a distinct CPUID feature flag.
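To make a few of the features described above more concrete, here is a minimal scalar sketch in portable C of three of them: the bit-wise conditional move, the rotate with per-element counts, and the horizontal add of adjacent bytes into words. The function names are hypothetical and are not XOP intrinsics. Vectors are modeled as small arrays, and the rotate model assumes plain left-rotation counts reduced modulo 32, which simplifies whatever count encoding the real instructions use.

```c
#include <assert.h>
#include <stdint.h>

/* Bit-wise conditional move on a 128-bit value held as two 64-bit halves:
   each result bit is taken from a where the selector bit is 1, else from b. */
void cmov_bits_model(uint64_t dst[2], const uint64_t a[2],
                     const uint64_t b[2], const uint64_t sel[2])
{
    for (int i = 0; i < 2; i++)
        dst[i] = (a[i] & sel[i]) | (b[i] & ~sel[i]);
}

/* Rotate with per-element counts: four 32-bit lanes, each rotated left by
   its own count. Assumption: counts are left-rotate amounts taken mod 32. */
void rotate_lanes_model(uint32_t dst[4], const uint32_t src[4],
                        const uint32_t count[4])
{
    for (int i = 0; i < 4; i++) {
        unsigned n = count[i] & 31u;
        /* n == 0 is handled separately to avoid an undefined 32-bit shift */
        dst[i] = n ? (src[i] << n) | (src[i] >> (32u - n)) : src[i];
    }
}

/* Horizontal add: adjacent signed bytes are summed into signed words,
   so 16 byte elements produce 8 word elements. */
void hadd_bytes_to_words_model(int16_t dst[8], const int8_t src[16])
{
    for (int i = 0; i < 8; i++)
        dst[i] = (int16_t)(src[2 * i] + src[2 * i + 1]);
}

/* Usage example with hand-checked values. */
void xop_models_demo(void)
{
    const uint64_t a[2]   = { UINT64_C(0xAAAAAAAAAAAAAAAA), 0 };
    const uint64_t b[2]   = { UINT64_C(0x5555555555555555), UINT64_C(0xFF) };
    const uint64_t sel[2] = { UINT64_C(0xFFFFFFFF00000000), 0 };
    uint64_t picked[2];
    cmov_bits_model(picked, a, b, sel);
    assert(picked[0] == UINT64_C(0xAAAAAAAA55555555));
    assert(picked[1] == UINT64_C(0xFF));

    const uint32_t src[4]   = { 0x80000001u, 1u, 0x12345678u, 0xF0000000u };
    const uint32_t count[4] = { 1u, 4u, 8u, 0u };
    uint32_t rotated[4];
    rotate_lanes_model(rotated, src, count);
    assert(rotated[0] == 0x00000003u && rotated[1] == 0x00000010u);
    assert(rotated[2] == 0x34567812u && rotated[3] == 0xF0000000u);

    const int8_t bytes[16] = { 1, 2, -3, 4, 127, 127, -128, -128 };
    int16_t words[8];
    hadd_bytes_to_words_model(words, bytes);
    assert(words[0] == 3 && words[1] == 1);
    assert(words[2] == 254 && words[3] == -256 && words[7] == 0);
}
```

The widening in the horizontal add is the point of the word-sized destination: two bytes of 127 sum to 254, which no longer fits in a signed byte.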