Project Holodeck gets teased

A lot of cool demonstrations were shown, including a preview of NVIDIA Project Holodeck, a virtual reality environment where multiple people can interact with objects. The demo showed four people interacting with the Koenigsegg Regera car in a physically accurate environment where users could do things like touch the steering wheel. It focused primarily on the professional side of things, but it's easy to see potential VR gaming applications.

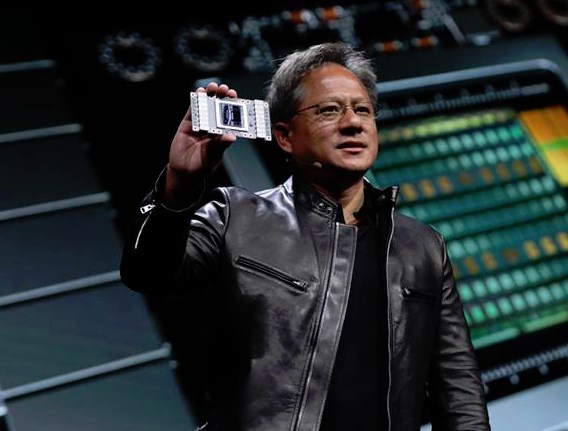

Tesla V100 is first Volta product

Probably the most exciting thing shown was the Tesla V100 datacenter card, the first model with NVIDIA's Volta GPU. Products with the Tesla V100 start shipping in Q3 2017 and will include the $149,000 DGX-1 deep learning rack as well as the $69,000 DGX Station.

The latter product is new in NVIDIA's lineup. Huang claims the company launched it because it was extremely popular among NVIDIA's own deep learning workers. It's basically a big desktop PC with an Intel Xeon processor and four Tesla V100 cards. This desktop supercomputer consumes up to 1500W and has its own watercooling solution to ensure low-noise operation. Unfortunately, no details were shared about consumer versions of Volta. Obviously, NVIDIA first wants to get these datacenter products out of the door, as they generate much higher profit than the gaming models.

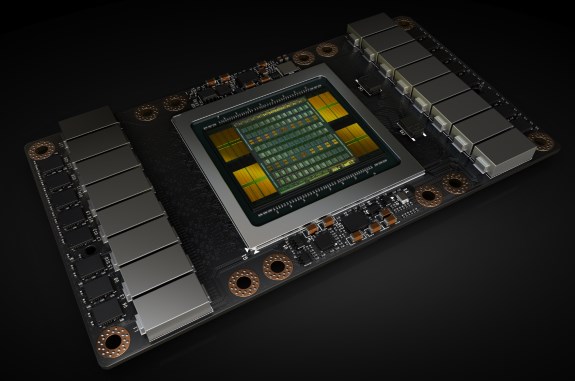

Here we have Huang showing off the flagship Tesla V100 "Volta" GPU. This is a truly massive chip with a huge die area of 815mm² containing a total of 21 billion transistors! Huang claims the development of the Volta GPU required an investment of $3 billion. It's the largest GPU ever created and it features 5120 CUDA cores clocked at 1455MHz and a 4096-bit memory bus with 16GB HBM2.

One of the new things in Volta is the inclusion of Tensor Cores. The 640 Tensor Cores promise 120 teraflops of performance for tensor-based machine learning applications. For single-precision and double-precision workloads, the Tesla V100 promises to be about 50 percent faster than the Pascal-based Tesla P100. FP32 performance is rated at 15 teraflops, versus 10.6 teraflops for the Tesla P100.
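For readers who want to see where these headline numbers come from, here is a rough back-of-the-envelope sketch in Python. It uses the core counts and 1455MHz clock quoted above and counts a fused multiply-add as two floating-point operations. The figure of 64 fused multiply-adds per Tensor Core per clock is not stated in this article and is an assumption taken from NVIDIA's description of the Volta architecture. The helper name `peak_tflops` is made up for illustration.

```python
# Back-of-the-envelope peak throughput from the figures quoted in the article.
CUDA_CORES = 5120          # from the article
TENSOR_CORES = 640         # from the article
CLOCK_HZ = 1455e6          # 1455MHz, from the article

FLOPS_PER_FMA = 2          # one fused multiply-add counts as two operations
# Assumption (not in the article): each Tensor Core performs
# 64 fused multiply-adds per clock cycle.
TENSOR_FMAS_PER_CLOCK = 64


def peak_tflops(units, fmas_per_unit_per_clock, clock_hz):
    """Peak teraflops for `units` execution units at the given clock."""
    return units * fmas_per_unit_per_clock * FLOPS_PER_FMA * clock_hz / 1e12


fp32_tflops = peak_tflops(CUDA_CORES, 1, CLOCK_HZ)
tensor_tflops = peak_tflops(TENSOR_CORES, TENSOR_FMAS_PER_CLOCK, CLOCK_HZ)

# Ratio of the rated FP32 figures quoted for the V100 and the P100.
rated_fp32_ratio = 15.0 / 10.6

print(f"FP32 peak:   {fp32_tflops:.1f} teraflops")
print(f"Tensor peak: {tensor_tflops:.1f} teraflops")
print(f"Rated V100/P100 FP32 ratio: {rated_fp32_ratio:.2f}x")
```

Under these assumptions the sketch lands at roughly 14.9 teraflops for FP32 and 119.2 teraflops for the Tensor Cores, which line up with the rounded 15 and 120 teraflops ratings. The rated FP32 figures themselves work out to a ratio of about 1.4x over the Tesla P100.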

The card has a memory bandwidth of 900GB/s, uses the NVLink 2.0 interface and has a TDP of 300W. Also very interesting is that NVIDIA confirmed this Volta chip will be manufactured on a TSMC 12nm FFN high-performance manufacturing process customized for NVIDIA, which is basically an enhanced version of the foundry's 16nm node. Lots of technical details about Volta can be found at the NVIDIA developer blog.
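As a quick sanity check on the memory figures, the 900GB/s bandwidth and the 4096-bit bus quoted earlier together imply a per-pin data rate. The short Python sketch below works it out, assuming 1GB means 10^9 bytes. The function name `per_pin_gbps` is made up for illustration, and the result is only what the quoted numbers imply, not an official HBM2 specification.

```python
# Implied per-pin data rate from the bandwidth and bus width quoted in the article.
BUS_WIDTH_BITS = 4096            # memory bus width, from the article
BANDWIDTH_BYTES_PER_S = 900e9    # 900GB/s, assuming 1GB = 10^9 bytes


def per_pin_gbps(bandwidth_bytes_per_s, bus_width_bits):
    """Data rate per bus pin in gigabits per second."""
    return bandwidth_bytes_per_s * 8 / bus_width_bits / 1e9


pin_rate_gbps = per_pin_gbps(BANDWIDTH_BYTES_PER_S, BUS_WIDTH_BITS)
print(f"Implied per-pin data rate: {pin_rate_gbps:.2f} Gbit/s")
```

That comes out to roughly 1.76 Gbit/s per pin. The very wide bus is what lets HBM2 reach this kind of bandwidth at a fairly modest per-pin speed.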

NVIDIA (NASDAQ: NVDA) today launched Volta™ -- the world's most powerful GPU computing architecture, created to drive the next wave of advancement in artificial intelligence and high performance computing.

The company also announced its first Volta-based processor, the NVIDIA® Tesla® V100 data center GPU, which brings extraordinary speed and scalability for AI inferencing and training, as well as for accelerating HPC and graphics workloads.

"Artificial intelligence is driving the greatest technology advances in human history," said Jensen Huang, founder and chief executive officer of NVIDIA, who unveiled Volta at his GTC keynote. "It will automate intelligence and spur a wave of social progress unmatched since the industrial revolution.

"Deep learning, a groundbreaking AI approach that creates computer software that learns, has insatiable demand for processing power. Thousands of NVIDIA engineers spent over three years crafting Volta to help meet this need, enabling the industry to realize AI's life-changing potential," he said.

Volta, NVIDIA's seventh-generation GPU architecture, is built with 21 billion transistors and delivers the equivalent performance of 100 CPUs for deep learning.

It provides a 5x improvement over Pascal™, the current-generation NVIDIA GPU architecture, in peak teraflops, and 15x over the Maxwell™ architecture, launched two years ago. This performance surpasses by 4x the improvements that Moore's law would have predicted.

Demand for accelerating AI has never been greater. Developers, data scientists and researchers increasingly rely on neural networks to power their next advances in fighting cancer, making transportation safer with self-driving vehicles, providing new intelligent customer experiences and more.

Data centers need to deliver exponentially greater processing power as these networks become more complex. And they need to efficiently scale to support the rapid adoption of highly accurate AI-based services, such as natural language virtual assistants, and personalized search and recommendation systems.

Volta will become the new standard for high performance computing. It offers a platform for HPC systems to excel at both computational science and data science for discovering insights. By pairing CUDA® cores and the new Volta Tensor Core within a unified architecture, a single server with Tesla V100 GPUs can replace hundreds of commodity CPUs for traditional HPC.

Breakthrough Technologies

The Tesla V100 GPU leapfrogs previous generations of NVIDIA GPUs with groundbreaking technologies that enable it to shatter the 100 teraflops barrier of deep learning performance.

They include:

- Tensor Cores designed to speed AI workloads. Equipped with 640 Tensor Cores, V100 delivers 120 teraflops of deep learning performance, equivalent to the performance of 100 CPUs.
- New GPU architecture with over 21 billion transistors. It pairs CUDA cores and Tensor Cores within a unified architecture, providing the performance of an AI supercomputer in a single GPU.
- NVLink™ provides the next generation of high-speed interconnect linking GPUs, and GPUs to CPUs, with up to 2x the throughput of the prior generation NVLink.
- 900 GB/sec HBM2 DRAM, developed in collaboration with Samsung, achieves 50 percent more memory bandwidth than previous generation GPUs, essential to support the extraordinary computing throughput of Volta.
- Volta-optimized software, including CUDA, cuDNN and TensorRT™ software, which leading frameworks and applications can easily tap into to accelerate AI and research.

Also on display was the NVIDIA Isaac robot simulator, a new tool to simplify the work of building and training intelligent robots and machines. Additionally, NVIDIA teased the upcoming NVIDIA GPU Cloud, a service to give machine learning developers easy access to NVIDIA DGX systems in the cloud. The service will run all commonly used deep learning software stacks and is built to run anywhere. Pricing will be revealed at a later date, and a public beta will open this summer.

Last but not least, NVIDIA self-driving car technology received another huge design win as Toyota announced it will use the NVIDIA Drive PX platform to create autonomous driving systems.